What is load testing?

All you need to know to prevent downtime

Imagine this: You’ve just launched a feature, and traffic is spiking. Everything's riding on your system holding steady—except it doesn’t. Pages stall, services fail, and users vanish. Sound familiar?

According to Google research, 53% of mobile site visits are abandoned if a page takes more than three seconds to load. An often-cited Amazon finding puts the cost of every 100ms of added latency at 1% of sales. Performance isn’t just a feature; it’s a business risk.

Yet for many teams, performance remains a black box. They test so infrequently that when they finally do, the scripts are outdated, the environments are misaligned, and the results aren’t reliable.

You can't fix one black box with another. Load testing is how you shine a light inside.

Types of load testing

Let's kick this off with a definition: Load testing is a performance testing technique where you simulate expected real-world user activity on an application or system to measure how it performs under normal and peak load conditions.

In simple terms:

✅ You simulate real users interacting with your app.

✅ You watch how fast, stable, and scalable it stays under pressure.

✅ You catch bottlenecks, slowdowns, or crashes before your users do.

Now picture a live event, a quiet Sunday, and a viral launch—each needs its own kind of test.

Different types of load testing serve different objectives. The loads and durations listed here are typical starting points; adjust them to your own requirements.

Here’s an abridged overview to help you match the right test type to your use case:

| Test type | Purpose | Typical load | Duration | Use case | What it reveals |

|---|---|---|---|---|---|

| Load test | Measure performance under expected load | 100% of average load | ~30 minutes | Development cycles, regression testing | Response time, error rate, bottlenecks |

| Soak test | Detect long-term degradation and memory leaks | 100% of average load | 24–72 hours | Pre-release validation, stability checks | Memory leaks, performance drift |

| Peak test | Ensure stability during high-traffic periods | 100% of peak load | ~1 hour | Product launches, marketing spikes | Latency, error resilience |

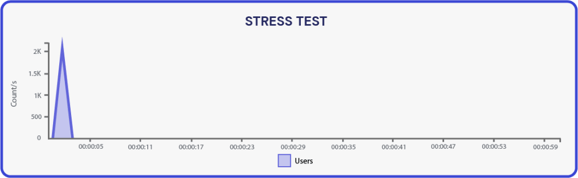

| Stress test | Push system beyond limits to find failure points | 150–200% of peak load | 2–3 hours | Scalability planning, failover analysis | Resource exhaustion, crash behavior |

| Spike test | Test sudden traffic surges and system recovery | 300% of average load (burst) | ~30 minutes | Unexpected user surges, feature drops | Crash recovery, auto-scaling issues |

| Capacity test | Identify max supported concurrent users | Gradually increasing load | Until failure | Infrastructure planning, load threshold mapping | Max throughput, saturation points |

| Volume test | Validate system behavior with massive data volumes | Average VUs, high data load | Several hours | Big data jobs, DB migrations | Data integrity, storage slowdowns |

| Smoke test | Verify basic system performance before deeper testing | Very low VUs | 15–30 minutes | Sanity check before large-scale tests | Broken flows, setup/config issues |
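To make the "Typical load" column concrete, here's a minimal Python sketch that turns your own average and peak traffic figures into a target number of virtual users for each test type. The multipliers come from the table (using the upper end of the 150–200% stress range). The function name `target_users` and the example traffic figures are illustrative assumptions, not part of any tool.

```python
# Multipliers taken from the "Typical load" column of the table.
# Capacity, volume, and smoke tests are omitted because the table gives
# them no fixed percentage.
LOAD_PROFILES = {
    "load": ("average", 1.0),
    "soak": ("average", 1.0),
    "peak": ("peak", 1.0),
    "stress": ("peak", 2.0),    # upper end of the 150-200% range
    "spike": ("average", 3.0),  # short burst at 300% of average
}


def target_users(test_type: str, average_users: int, peak_users: int) -> int:
    """Return how many virtual users to simulate for the given test type."""
    basis, multiplier = LOAD_PROFILES[test_type]
    base = average_users if basis == "average" else peak_users
    return round(base * multiplier)


# Hypothetical traffic: 400 users on average, 1,000 at peak.
for name in LOAD_PROFILES:
    print(name, target_users(name, average_users=400, peak_users=1000))
# load 400
# soak 400
# peak 1000
# stress 2000
# spike 1200
```

Treat the results as starting points: the right numbers depend on how you measure "average" and "peak" in your own analytics.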

Load testing vs stress testing

Although the terms are often used interchangeably, load testing and stress testing serve different purposes in performance testing. Both are pressure tests. One checks if it holds, the other when it breaks.

Understanding their differences—and using them together—is critical for building resilient, scalable applications.

- Load testing measures system performance under expected user load. It helps you validate that your application meets performance requirements in typical scenarios.

- Stress testing pushes your system beyond its peak load to identify breaking points and assess how gracefully it fails and recovers.

| Feature | Load Testing | Stress Testing |
|---|---|---|
| Goal | Validate system under expected load | Determine system limits under extreme load |
| Traffic volume | 100% of expected traffic | 150–200% or more of peak traffic |
| Focus | Response time, throughput, error rate | Failure behavior, resource exhaustion |
| Use case | Continuous monitoring, feature rollouts | Pre-launch, infrastructure scaling tests |
| Outcome | Performance baseline & capacity planning | Failure tolerance & recovery strategies |
| Test result usage | Optimization and regression detection | Fault diagnosis, stability improvements |

When does load testing make sense?

Think of it like a smoke alarm: better to test before the fire.

Load testing is important at every stage of the development lifecycle. Shift-left load testing is about putting performance first in your CI/CD pipeline. It enables continuous performance assurance, greater confidence in deployments, and better user experiences.

It’s not just for post-QA. Testing early and often brings:

- Early detection of bottlenecks: Developers can catch slow database queries, memory leaks, or poor concurrency handling before code reaches staging.

- Faster feedback loops: Automated load tests triggered on code commits ensure performance issues are identified within minutes—not days.

- More reliable releases: With regular performance tests baked into each deployment, teams avoid regressions and reduce the risk of performance issues in production.

- Lower costs: Fixing a performance issue early in development is far less expensive than addressing it after go-live.
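As a rough illustration of an automated check on each commit, the sketch below compares a test run against a stored baseline and flags any metric that got noticeably worse. The metric names, the 10% tolerance, and the sample numbers are assumptions made for this example. In a real pipeline the values would come from your load testing tool's report.

```python
def find_regressions(baseline: dict, current: dict, tolerance: float = 0.10) -> list:
    """Return the metrics that are worse than baseline by more than `tolerance`.

    Every metric is treated as "lower is better" (response time, error rate).
    A metric missing from `current` is reported as a regression.
    """
    regressions = []
    for name, base_value in baseline.items():
        allowed = base_value * (1 + tolerance)
        if current.get(name, float("inf")) > allowed:
            regressions.append(name)
    return regressions


# Hypothetical numbers: p95 response time in ms and error rate as a fraction.
baseline = {"p95_ms": 800.0, "error_rate": 0.010}
current = {"p95_ms": 950.0, "error_rate": 0.010}

failed = find_regressions(baseline, current)
print("regressions:", failed)
# In a CI job you would fail the build when `failed` is not empty,
# for example by exiting with a non-zero status code.
```

A relative tolerance keeps the gate from failing on normal run-to-run noise. How wide to make it is a judgment call for each team.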

What kind of companies can benefit from load testing?

Load testing is critical for any organization that delivers digital services to a large user base, faces performance-sensitive use cases, or operates within high-stakes environments where downtime is costly.

Whether you’re deploying a new feature, migrating infrastructure, or preparing for seasonal surges, a robust load testing strategy helps ensure your systems scale, respond quickly, and deliver consistently reliable experiences.

From fintech to fashion, here’s where load testing matters most:

Software & SaaS

Engineering-driven organizations depend on backend reliability and CI/CD speed. Load testing tools validate APIs and microservices and help ensure seamless deployments, enabling developers to spot performance regressions early.

- Test each service independently before integration

- Validate performance of new features in test environments

- Ensure that development velocity doesn't come at the cost of application performance

Broadcasting & streaming

These platforms deal with massive, simultaneous user interactions—especially during live events. Distributed load testing simulates global user bases and evaluates how well video streams perform under heavy load.

- Assess buffering delays and latency in real time

- Validate content delivery networks (CDNs)

- Ensure uptime during breaking news or sports events

Finance & fintech

Milliseconds matter when money is on the line. Load testing helps ensure trading platforms, banking APIs, and authentication systems stay responsive and secure when many users are active at once.

- Prevent slow transaction times and bottlenecks

- Ensure compliance by testing system stability and logging under load

- Simulate peak events like earnings reports or market volatility

Ticketing & events

Traffic spikes are the norm during ticket releases. Stress testing and spike testing are essential to validate queueing systems, payment flows, and backend services.

- Simulate flash-sale conditions with thousands of concurrent users

- Ensure checkout and payment gateways don’t fail under pressure

- Load test integration points with CRM and inventory systems

Telecom

Telecommunication services are infrastructure-dependent and user-heavy. Load testing validates high-throughput systems such as customer portals, self-service apps, and billing engines.

- Test data provisioning and plan activation workflows

- Measure performance across multiple locations and networks

- Identify and eliminate latency in call or data service APIs

Retail & e-commerce

Load testing is vital for avoiding downtime during seasonal sales and promotions. Customers expect seamless experiences across checkout, inventory, and payment flows.

- Simulate holiday shopping traffic or influencer campaigns

- Test discount codes, loyalty program integrations, and cart behavior

- Validate third-party service interactions (payment, shipping, reviews)

Government services

Public sector applications must support fixed-time peaks (e.g., tax filings, school admissions). These services must be accessible and responsive across user devices.

- Validate identity verification, form submissions, and data uploads

- Test for accessibility under concurrent use (ADA compliance)

- Ensure security and reliability during civic deadlines

Healthcare & healthtech

Applications like telemedicine, patient portals, and health record systems require zero downtime and high compliance.

- Simulate concurrent user traffic for virtual consultations

- Validate EMR system performance under regulatory load requirements

- Ensure resilience during vaccine drives or emergency updates

Gaming & digital entertainment

For online gaming platforms and streaming content services, performance is part of the experience.

- Test matchmaking services under varying loads

- Simulate download spikes for new releases or patches

- Ensure seamless transitions between media assets or levels

Pros and cons of load testing

Load testing plays a crucial role in software testing and performance engineering, but like any tool or technique, it has trade-offs.

Understanding both the benefits and limitations can help teams better plan their testing strategies.

Pros

- Improves system performance: By identifying bottlenecks and optimizing response times, load testing enhances overall application speed and reliability.

- Prevents downtime: Catching issues before they hit production reduces the risk of outages under user load.

- Supports scalability planning: Knowing how your application behaves under various load conditions informs decisions about infrastructure scaling.

- Enhances user experience: Fast, responsive applications lead to higher customer satisfaction and retention.

- Validates third-party integrations: Tests the reliability of external services (e.g., payment providers, APIs) under pressure.

- Increases release confidence: Regular testing in CI/CD pipelines ensures that performance regressions are caught early.

- Reduces costs long-term: Early detection of performance issues avoids expensive fixes post-deployment.

Cons

- Setup complexity: Accurate load testing requires a well-prepared environment that closely mirrors production, which can be time-consuming.

- Tooling and infrastructure costs: High-scale tests might require commercial tools or cloud infrastructure that adds to costs.

- Test realism challenges: Simulating realistic traffic, behavior, and data across geographies and device types can be difficult.

- Skill requirements: Effective load testing requires specialized skills in scripting, monitoring, and analysis.

- False positives/negatives: Inaccurate configurations or unrealistic test scenarios may lead to misleading results.

Load testing best practices

A successful load testing strategy isn’t just about running test scripts—it’s about having a repeatable, realistic, and insightful process that aligns with your development and release workflows.

Whether you're optimizing for system performance, preventing downtime, or meeting SLAs, following best practices ensures your efforts translate into meaningful results.

Here’s how to make your load testing not just work—but work smarter:

- Start with the user perspective: Define the critical user journeys and actions that matter most to your business.

- Define clear KPIs: Set performance metrics upfront, such as response time <2s, throughput targets, and acceptable error rates.

- Validate your test environment: Ensure it mirrors production as closely as possible to maintain data integrity and accuracy.

- Ramp up incrementally: Gradually increase user load to simulate ramp-up behavior and observe how the system reacts.

- Simulate real-world traffic: Align load test scenarios with expected user load, traffic patterns, and historical usage data.

- Use test automation: Automate tests within your CI/CD pipeline to maintain repeatability and consistency.

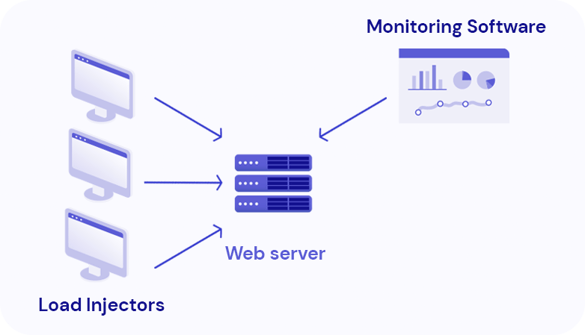

- Monitor and observe: Use performance monitoring tools to analyze test results, identify bottlenecks, and correlate them with infrastructure behavior.

- Analyze and act: Don’t just collect test data—use it to optimize configuration, tune systems, and inform engineering decisions.

- Repeat regularly: Performance testing should be continuous, not occasional. Integrate it into your development and deployment lifecycle.
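The "ramp up incrementally" practice can be sketched as a simple step schedule. The Python snippet below is a tool-neutral illustration: the function name `ramp_schedule` and the example figures are assumptions, and most load testing tools offer their own way to express the same idea.

```python
def ramp_schedule(target_users: int, steps: int, step_seconds: int) -> list:
    """Return (start_second, users) pairs that climb to the target in equal steps."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [
        (i * step_seconds, round(target_users * (i + 1) / steps))
        for i in range(steps)
    ]


# Hypothetical plan: reach 1,000 users in four one-minute steps.
for start, users in ramp_schedule(target_users=1000, steps=4, step_seconds=60):
    print(f"from t={start}s: {users} users")
# from t=0s: 250 users
# from t=60s: 500 users
# from t=120s: 750 users
# from t=180s: 1000 users
```

Holding each step for a fixed time makes it easier to see at which load level response times or errors start to change.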

How to start load testing

Getting started with load testing doesn't have to be overwhelming. Whether you're testing a new feature rollout or preparing for an influx of concurrent users, starting with a structured, strategic approach makes all the difference.

Here’s a seven-step framework to get from zero to insights, fast:

- Define KPIs: Focus on throughput, error rate, and response time. Set benchmarks to measure system performance against real-world expectations.

- Identify core flows: Determine which user journeys—such as logins, checkouts, or form submissions—are critical to your application’s success.

- Select a testing tool: Choose a load testing tool like Gatling based on your stack, team experience, and scalability needs.

- Create test scripts: Write test scripts that accurately simulate user behavior, covering different endpoints, sessions, and concurrency patterns.

- Run tests iteratively: Start small, analyze initial results, and scale incrementally. This helps isolate bottlenecks before they impact more complex tests.

- Analyze and optimize: Use performance test results to identify and address bottlenecks, latency issues, and system inefficiencies.

- Automate: Embed load testing into your CI/CD pipeline to ensure ongoing performance validation with every code change or release.
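To show what a test script does under the hood, here's a deliberately tiny load generator written with the Python standard library only. It's a sketch for understanding, not a replacement for a real tool. `fake_checkout` is a hypothetical stand-in for a real request to a test environment, and the user and iteration counts are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_load(action, users: int, iterations: int) -> list:
    """Call `action` from `users` concurrent workers, `iterations` times each.

    Returns a flat list of (elapsed_seconds, ok) samples, where ok is False
    if the action raised an exception.
    """
    def virtual_user(_user_id):
        samples = []
        for _ in range(iterations):
            start = time.perf_counter()
            try:
                action()
                ok = True
            except Exception:
                ok = False
            samples.append((time.perf_counter() - start, ok))
        return samples

    with ThreadPoolExecutor(max_workers=users) as pool:
        per_user = pool.map(virtual_user, range(users))
        return [sample for samples in per_user for sample in samples]


def fake_checkout():
    """Hypothetical stand-in for a real request (e.g. an HTTP call)."""
    time.sleep(0.01)


samples = run_load(fake_checkout, users=5, iterations=3)
succeeded = sum(1 for _, ok in samples if ok)
print(len(samples), "samples,", succeeded, "succeeded")
```

Starting with a handful of users, as here, matches the "start small" advice: confirm the script behaves before scaling the numbers up.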

How to interpret a load test

You’ve got the results—now let’s decode the story they tell.

Performance metrics are more than just numbers—they reveal how your system responds under pressure, where it's strong, and where it needs optimization.

Here's how to make sense of the most important performance metrics:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Response Time | The time it takes for the system to respond to a request | Directly affects user experience; higher response time signals bottlenecks |
| Throughput | The number of transactions or requests processed per second | Indicates the system’s capacity to handle load efficiently |
| Error Rate | The percentage of failed transactions or requests | Helps identify failures due to server crashes, timeouts, or overload |
| Concurrent Users | The number of users simulated at the same time | Shows how well your system supports multiple simultaneous interactions |
| CPU & Memory Usage | The amount of system resources consumed during the test | Detects resource exhaustion or imbalance in application components |
| Latency | The time delay between request initiation and data receipt | Crucial for real-time services; spikes in latency may impact responsiveness |
| Peak Load Handling | How the system behaves at the highest traffic point | Reveals system limits and helps in capacity planning |
| Recovery Time | Time taken to return to normal after a load spike or failure | Assesses system resiliency and auto-scaling capabilities |
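Several of these metrics can be derived from the same raw samples. The sketch below computes throughput, error rate, and response time percentiles from a list of `(response_time_ms, ok)` pairs. The sample data is invented, and the nearest-rank percentile used here is one common definition among several, so your tool's numbers may differ slightly.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of
    the samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(samples, duration_seconds):
    """Summarize (response_time_ms, ok) samples collected over duration_seconds."""
    times = [t for t, _ in samples]
    failures = sum(1 for _, ok in samples if not ok)
    return {
        "throughput_rps": len(samples) / duration_seconds,
        "error_rate": failures / len(samples),
        "p50_ms": percentile(times, 50),
        "p95_ms": percentile(times, 95),
        "max_ms": max(times),
    }


# Invented data: ten requests over five seconds, two of them failed.
samples = [(120, True), (130, True), (150, True), (180, True), (200, True),
           (220, True), (250, True), (300, True), (900, False), (2500, False)]
print(summarize(samples, duration_seconds=5))
```

Here the median is 200 ms, while the 95th percentile is 2,500 ms. That gap is why percentiles usually tell you more than an average.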

When analyzing your test results, compare metrics against your predefined KPIs. Look for patterns: Are errors spiking at high concurrency? Is response time degrading over time (the classic soak test finding)? Are resource metrics such as CPU or memory pinned at their ceiling just before failure (the classic stress test finding)?

Visual dashboards, APM tools, and historical baselines can make this analysis easier and more actionable.
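Two of those pattern checks are simple enough to sketch in a few lines of Python. The first finds the load level at which the error rate first crosses a KPI threshold. The second compares the end of a long run with its start to spot drift. The function names, the thresholds, and the data are all assumptions made for illustration.

```python
from statistics import mean


def first_breaking_step(steps, max_error_rate):
    """steps: (concurrent_users, error_rate) pairs ordered by increasing load.

    Returns the first user count whose error rate exceeds the threshold,
    or None if no step does.
    """
    for users, error_rate in steps:
        if error_rate > max_error_rate:
            return users
    return None


def drift_ratio(response_times_ms, window):
    """Mean of the last `window` samples divided by the mean of the first
    `window` samples. A value well above 1.0 suggests degradation over time."""
    return mean(response_times_ms[-window:]) / mean(response_times_ms[:window])


# Invented stress test results: errors jump at 800 concurrent users.
steps = [(100, 0.001), (200, 0.002), (400, 0.004), (800, 0.06)]
print(first_breaking_step(steps, max_error_rate=0.01))

# Invented soak test results: response time creeps up during the run.
soak = [200, 210, 205, 215, 260, 300, 340, 410]
print(round(drift_ratio(soak, window=2), 2))
```

Checks like these work best against a historical baseline, since what counts as "well above 1.0" depends on how noisy your system normally is.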

Why open-source tools fall short at scale

Tools like Gatling open source are great starting points, but as system complexity grows, they fall short. Challenges include:

- Manual test environment configuration

- Limited support for distributed load testing

- No real-time test result dashboards

- High DevOps overhead

When testing becomes mission-critical, teams need a scalable, automated, and observability-ready solution. Gatling Enterprise removes the heavy lifting, letting teams focus on what matters: building resilient, high-performing applications.

Redefine load testing with Gatling

Load testing has evolved from a basic checkbox in the development cycle to a strategic pillar for delivering resilient, high-performing digital experiences.

That’s where Gatling comes in.

Gatling reimagines load testing for the era of shift-left, DevOps, and real-time collaboration. It turns performance testing from an isolated final step into a continuous, code-driven practice teams actually rely on.

With Gatling, you get:

- Seamless integration with CI/CD pipelines for automated, early performance validation

- Real-time, interactive reporting to catch bottlenecks while tests run

- Distributed load generation across public, private, or hybrid infrastructures

- Support for modern protocols like HTTP, WebSocket, MQTT, and more

- An open-source core backed by an active, innovative community

Ready to experience the difference?

Book a demo of Gatling Enterprise and see how our platform transforms API load testing, distributed testing, and performance automation.

Prefer to explore first? Get started with our documentation and open-source resources today.
