Load Testing And Microservices Architecture

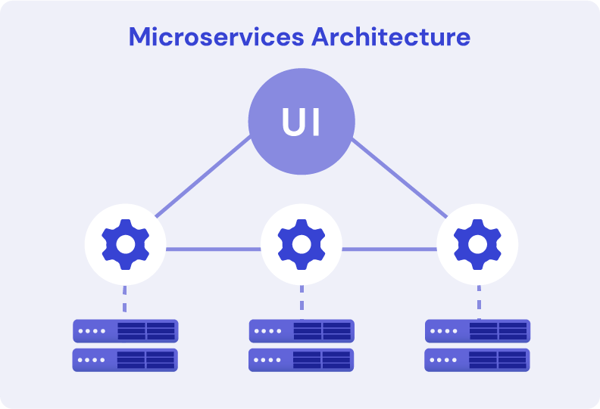

A huge proportion of modern software architecture revolves around the creation of microservices. The microservice approach to software development sees each software component isolated into its own independent service. Since each software component developed as a microservice is independent of other microservices, adding new functionalities or scaling these services is easier when compared to more traditional monolithic software development models.

Although microservices have obvious advantages when it comes to deploying and scaling applications, there are some significant challenges that we also need to be aware of and overcome – particularly around our approach to load testing of microservices.

What is load testing in microservices? You can load test individual microservices in isolation to check their scalability, reliability, and responsiveness without much issue. However, a fully functioning software application is typically made up of multiple microservices that work in tandem. Load testing individual microservices is not sufficient to guarantee that our application will perform to the expected standard in production, so a specific load testing strategy for microservices is required.

How then should we approach load testing all these different microservices? That’s the question we will be addressing in this article.

Understanding Microservices

What Are The Key Characteristics and Benefits Of Microservices?

Before we dive into the best approaches for load testing microservices, let’s first establish some of their key characteristics and subsequent benefits:

- Simultaneous development – because different parts of an application are developed as separate microservices, multiple microservices can be created simultaneously. This speeds up development and enables teams to deliver a working version of an application more quickly.

- Clearly defined architecture – breaking large applications down into small microservices makes them easier to understand and work on. This modular approach also makes an application's implementation easier and more efficient.

- Increased resilience – if a software application follows a microservice architecture, then a failure in one single microservice is unlikely to take down the entire application. Since each microservice runs in isolation, an application can remain functional even if one or more of its microservices are failing.

- Better scalability – if, whilst running an application in production, it becomes clear that particular microservices are becoming bottlenecks or require additional resources, those individual microservices can be scaled and adjusted accordingly. These bottlenecks are more difficult to overcome in monolithic applications.

What Are The Three Types of Tests For Microservices?

To realize the advantages of microservices as described above, we must implement a robust testing strategy. We can follow the traditional test pyramid model to identify the three types of tests for microservices we are primarily concerned with:

- Unit – tests that fall under this category relate to testing the microservice in isolation from other microservices. These tests will be run early and often in the development lifecycle and will typically be executed as part of CI/CD automation.

- Integration – this area of testing verifies that a microservice can successfully interact with other related microservices. We might use service virtualization to achieve this (more on that further below in this article) or even have actual versions of the dependent services deployed and running in the test environment so that they can be called.

- E2E – these tests cover the entire user journey of the application, utilizing all relevant microservices. End-to-end tests are typically performed through a UI or by triggering the API calls corresponding to a user journey in the expected order.

Strategies for Performance Testing Microservices

When it comes to load testing microservices, there are a few considerations that we need to be aware of and plan for upfront.

Ensure Sufficient Monitoring Is In Place

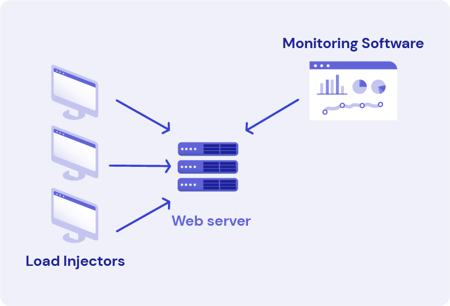

The first thing we need to ensure is that the test environment hosting our microservice has sufficient monitoring in place. Whilst a load testing tool like Gatling will be able to provide detailed information about the request and response times of the transactions from our service, we need additional visibility into how our application is performing in terms of resource utilization.

For this, we need to have an Application Performance Monitoring (APM) tool configured. You measure microservice performance by configuring your APM tool to monitor resource utilization metrics such as CPU, memory, and disk I/O whilst there is traffic running through your microservices. Additionally, it can be helpful to monitor other metrics like reading and writing to message queues and memory caches.

Measuring the performance of microservices has to go beyond simply analyzing the request and response times from HTTP calls to the service. Visibility of resource utilization in the environment the application and microservices are running in is essential.

Focus Testing On High-Risk Microservices

Whilst it’s crucial to conduct load testing of all the microservices that support our application, we don’t want to find ourselves in a position where long-running test executions are slowing down the development process.

We mitigate this by identifying the highest-risk microservices in our application and starting load testing of these microservices early in the development cycle. We can leave load testing of lower-risk microservices till later.

For example, if we are creating an e-commerce application, one microservice might be responsible for the checkout and purchase process. If this microservice were to fail, then it would prevent the customer from completing their order and would result in lost revenue. It could, therefore, be worthwhile investing time in load testing this critical microservice in the early stages of development, even if it meant longer test executions or CI/CD build times.

Use Service Virtualization

Any single microservice will typically have dependencies on multiple other microservices to function correctly. During the early stages of application development, when multiple microservices are typically being created in parallel, one or more of these dependent microservices is likely to be unavailable or incomplete.

We can overcome this obstacle by taking advantage of service virtualization. You can think of service virtualization as being similar to mocking the calls to another microservice. With service virtualization in place, we can focus our efforts on testing the service we are most interested in without relying on the availability of other dependent microservices in our test environment.
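To make the idea concrete, here is a minimal sketch of a virtualized dependency written with Python's standard library. It is not a Gatling feature or a full service virtualization product. The inventory service, its `/inventory/sku-123` path, and the canned payload are all hypothetical names chosen for illustration.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical canned responses standing in for a dependent "inventory" service.
CANNED_RESPONSES = {
    "/inventory/sku-123": {"sku": "sku-123", "in_stock": True, "quantity": 42},
}


class VirtualInventoryHandler(BaseHTTPRequestHandler):
    """Answers GET requests with canned JSON instead of calling a real service."""

    def do_GET(self):
        payload = CANNED_RESPONSES.get(self.path)
        status = 200 if payload is not None else 404
        if payload is None:
            payload = {"error": "not found"}
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Suppress per-request logging so load test output stays readable.
        pass


def start_virtual_service(port=0):
    """Start the stub on a background thread; port 0 lets the OS pick a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), VirtualInventoryHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_json(url):
    """Small helper used to check that the stub answers as expected."""
    with urllib.request.urlopen(url, timeout=5) as response:
        return json.loads(response.read().decode("utf-8"))
```

The service under test would be configured to call the stub's address instead of the real dependency. Because the stub answers almost instantly, the response times measured this way reflect the service under test rather than its dependencies, which is worth keeping in mind when comparing against production behavior.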

Use Multiple Runtime Environments

Relying on a single runtime environment can quickly become a major bottleneck for load-testing microservices. Firstly, load test execution can take a long time to complete. We must avoid slowing down or delaying application development simply because we have multiple load tests pending and only a single environment to run them on.

Secondly, it’s not uncommon for application performance to vary when it’s deployed in different geographical locations. For example, an application deployed into a US-based data center could experience vastly different transaction response times compared to the same application deployed in a data center in Asia. Ideally, we want to ensure that the geolocation of our runtime environment used for testing matches the runtime environment that will be used in production.

We also need to ensure that the traffic we send to our application originates from realistic locations. For example, if we create a public-facing application hosted on the internet, we will likely receive traffic from all over the world. To simulate this accurately, we must use a distributed load testing environment, with load injectors deployed in multiple geographical locations.
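As a small illustration of planning a distributed test, the sketch below splits a target number of virtual users across injector regions in proportion to the expected share of traffic. The region names and weights are hypothetical, and the largest remainder rounding is just one reasonable choice, not something prescribed by any particular tool.

```python
def split_users(total_users, region_weights):
    """Split users across regions in proportion to their weights.

    Uses the largest remainder method so the parts always add up to total_users.
    """
    weight_sum = sum(region_weights.values())
    if weight_sum <= 0:
        raise ValueError("region weights must sum to a positive number")

    # Exact (fractional) share for each region.
    exact = {r: total_users * w / weight_sum for r, w in region_weights.items()}
    # Start from the rounded-down share.
    allocation = {r: int(share) for r, share in exact.items()}

    # Hand out the users lost to rounding, largest fractional part first.
    leftover = total_users - sum(allocation.values())
    by_remainder = sorted(exact, key=lambda r: exact[r] - allocation[r], reverse=True)
    for region in by_remainder[:leftover]:
        allocation[region] += 1
    return allocation
```

For example, `split_users(1000, {"us-east": 50, "eu-west": 30, "ap-south": 20})` assigns 500, 300, and 200 users to the three hypothetical regions.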

Take Time To Establish Service Level Agreements (SLAs)

It’s surprisingly common for load testing of microservices and the applications they support to begin without any service level agreements (SLAs) in place. Without these SLAs, the team responsible for load-testing microservices has to rely on guesswork to determine whether or not the transaction response times of a service are acceptable.

We can avoid this problem by taking the time to bring microservice developers and product management together to establish acceptable levels of performance. We typically establish SLAs for measuring microservice performance by defining the expected transaction response times and error rates for the service.

The SLAs defined from this process don’t need to be rigid or exact. Still, they give everyone involved in the development of the application and its corresponding microservices an indication of what the acceptable level of performance is.
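Once response time and error rate targets are agreed, they can be checked automatically. The following is a minimal sketch, assuming each request is recorded as an `(elapsed_ms, ok)` pair and that the SLA is expressed as a 95th percentile response time plus a maximum error rate. The `Sla` class, the `evaluate` function, and the threshold values are illustrative names, not part of any specific tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Sla:
    p95_response_ms: float  # 95th percentile response time must not exceed this
    max_error_rate: float   # fraction of failed requests allowed, between 0 and 1


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not values:
        raise ValueError("no samples to evaluate")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def evaluate(results, sla):
    """Compare recorded (elapsed_ms, ok) samples against the agreed SLA."""
    times = [elapsed_ms for elapsed_ms, _ in results]
    errors = sum(1 for _, ok in results if not ok)
    p95 = percentile(times, 95)
    error_rate = errors / len(results)
    return {
        "p95_ms": p95,
        "error_rate": error_rate,
        "passed": p95 <= sla.p95_response_ms and error_rate <= sla.max_error_rate,
    }
```

A report with `"passed": False` gives the team a concrete signal to discuss, which is the point of agreeing on SLAs up front, even when the thresholds are only approximate.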

Measure Microservice Performance Over Time

The development of microservices is fast-paced, typically with multiple builds and versions of any one service created over a short period of time. It's therefore essential to track the performance of the service whilst it's being developed so that we can determine whether any recent code changes have resulted in performance degradation.

We achieve this by starting load testing of our high-risk microservices early, with load testing integrated into the CI/CD pipeline. We also need a way to quickly compare the performance of different builds and versions of our microservice. Gatling Enterprise solves this problem for you by allowing you to compare executions of any load test easily.
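The comparison itself can be as simple as checking each metric of a new build against a baseline. Below is a hedged sketch of that idea. It is not how Gatling Enterprise implements run comparison. The metric names and the 10% tolerance are assumptions, and the function treats every metric as "lower is better".

```python
def find_regressions(baseline, candidate, tolerance=0.10):
    """Return metrics where the candidate build is worse than the baseline.

    Both arguments map metric names to values where lower is better
    (for example response times in milliseconds). A metric is reported
    when it grew by more than `tolerance`, expressed as a fraction.
    """
    regressions = {}
    for metric, base_value in baseline.items():
        new_value = candidate.get(metric)
        if new_value is None or base_value <= 0:
            # Skip metrics that are missing or cannot be compared relatively.
            continue
        change = (new_value - base_value) / base_value
        if change > tolerance:
            regressions[metric] = round(change, 4)
    return regressions
```

A CI/CD step could fail the build when the returned dictionary is not empty, so a slowdown is caught on the commit that introduced it.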

Consider Testing Multiple Microservices Together

Although microservices are designed to function independently, they typically rely on working together to implement end-to-end functionality of applications. When you have such microservices in your domain, it can, therefore, be valuable to load test those microservices simultaneously.

This can be accomplished by executing realistic load tests against multiple different microservices in parallel. Gatling supports concurrent execution of different load test scenarios as standard, all within the same single load testing script. Whilst the load test is executing, we can analyze the performance of the different microservices by utilizing the monitoring tools described below.
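To show the principle outside of any particular tool, the sketch below runs several scenarios at the same time using Python's standard library and records per-request timings for each. It is a simplified stand-in for a real load test, not Gatling code. The scenario names and the callables that represent service calls are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_scenario(name, call_service, requests):
    """Call one service repeatedly and record (elapsed_ms, ok) per request."""
    samples = []
    for _ in range(requests):
        started = time.perf_counter()
        try:
            call_service()
            ok = True
        except Exception:
            # Any exception counts as a failed request in this sketch.
            ok = False
        samples.append(((time.perf_counter() - started) * 1000, ok))
    return name, samples


def run_in_parallel(scenarios, requests_per_scenario):
    """Run every scenario at the same time so the services are loaded together."""
    with ThreadPoolExecutor(max_workers=len(scenarios)) as pool:
        futures = [
            pool.submit(run_scenario, name, call, requests_per_scenario)
            for name, call in scenarios.items()
        ]
        return dict(future.result() for future in futures)
```

Here `scenarios` maps a name such as `"checkout"` to a zero-argument function that performs one request against that microservice. Each scenario uses a single worker thread, so this shows how concurrent execution is structured rather than how to generate realistic traffic volumes.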

Tools and Technologies For Performance Testing Microservices

Successful load testing of microservices hinges on the use of the correct tools. We first need a load testing tool that is capable of simulating real-world scenarios effectively. Assuming that we are load-testing an application that will receive traffic from multiple geographic locations, the tool must also support distributed load generation. It should be easy to integrate into our CI/CD pipeline.

These requirements make Gatling a perfect choice for your microservices load testing tool. Gatling can generate huge levels of HTTP traffic from minimal load injector resources. A Gatling load test environment distributed worldwide can be spun up with a few clicks. The load test environment resources are automatically torn down once the test completes – meaning you are only charged whilst the load test is executing.

Beyond tools that generate load, we must also ensure we have a suitable APM tool configured to monitor the microservices running in our test environment. A tool like Gatling will be able to provide detailed information on the request and response times of the transactions to our microservices. Still, an APM tool is required so that we can dig into the resource utilization metrics of the hardware the application is running on and pinpoint any problems or bottlenecks.

A few popular tools that you might consider for your APM requirements are:

All the APM tools listed above should meet your requirements for monitoring microservice performance. Still, it’s worth installing a trial version of any of these tools to verify that it meets your expectations.

Summary

In this article, we’ve explored the advantages and challenges of microservice architecture and highlighted the need for robust load testing. We’ve identified some of the primary considerations to address when it comes to load-testing microservices and given suggestions for how these obstacles can be overcome.

There can be no shortcuts when it comes to performance testing microservices. Simply focusing load testing on the high-risk services is insufficient because these components depend on other microservices to function. Planning a microservice load testing strategy following the principles outlined in this article is the appropriate way to mitigate this.

Gatling Enterprise enables the deployment of distributed load-testing infrastructure in the cloud or on-premises with just a few clicks. In addition to monitoring test results on a detailed dashboard in real time, Gatling Enterprise offers several other significant features that support your microservice load testing efforts.
